Robotic Searching Gets Smarter

Algorithms that make robots smarter could be applied to search and rescue missions, mine detection, and flaw inspection

From shipwrecks and missing planes to vanished persons, the depths of water can hold many secrets. A common way to search far below the surface is to deploy underwater robots to scour the seabed. But whether or not they find anything is up to humans to determine.

“A current problem is that we treat search robots like they are blind,” said Todd Murphey, associate professor of mechanical engineering at McCormick School of Engineering. “They often run back and forth over an area to passively record footage, and then the only thing that can judge the utility of the information is the person looking at the video.”

Murphey and his collaborators are developing computational algorithms that make search robots smarter and more autonomous, cutting out the human middleman. Funded by the National Science Foundation and United States Army, this technology has the potential to make search and rescue missions much more efficient and could also be applied to mine detection and flaw inspection in large structures.

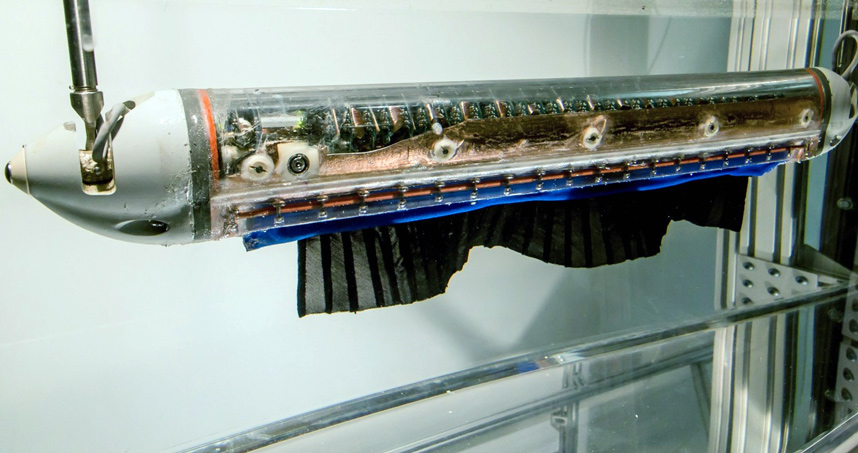

Murphey’s team tested the algorithms on the SensorPod robot, which was developed in the laboratory of collaborator Malcolm MacIver, an associate professor of mechanical engineering and biomedical engineering. Modeled after the weakly electric black ghost knifefish, MacIver’s SensorPod surveys its area by actively emitting electromagnetic waves and recording their voltage. This electrolocation technique allows the robot to infer the presence of objects in its environment.

Working with PhD students Yonatan Silverman and Lauren Miller, the researchers trained the SensorPod to find a sphere the size of a ping-pong ball submerged in its tank. As it acquires data, the robot adapts its motion to that information rather than simply sweeping back and forth.

“It doesn’t try to get to a particular spot in the tank,” Murphey said. “It assesses where it might find the object and plans a path through the space that reflects all the areas that might be informative. It constantly updates where it thinks there might be information that will improve its search.”
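The behavior Murphey describes, keeping an estimate of where the object might be and steering toward the informative areas, can be illustrated with a minimal sketch. This is not the team's algorithm. It is a simplified greedy Bayesian grid search, and every name and number in it (`update_belief`, `search`, the 5-by-5 grid, the detection rate `p_detect`, and the false-alarm rate `p_false`) is an assumption made for illustration.

```python
import numpy as np

def update_belief(belief, cell, detected, p_detect=0.8, p_false=0.05):
    """Bayes update of a grid belief after sensing a single cell.

    belief[i, j] is the probability that the target is in cell (i, j).
    p_detect and p_false are assumed sensor rates, not measured values.
    """
    if detected:
        # A "hit" is likely if the target is here, rare otherwise.
        likelihood = np.full(belief.shape, p_false)
        likelihood[cell] = p_detect
    else:
        # A "miss" makes this cell less plausible than the others.
        likelihood = np.full(belief.shape, 1.0 - p_false)
        likelihood[cell] = 1.0 - p_detect
    posterior = likelihood * belief
    return posterior / posterior.sum()

def search(target, shape=(5, 5), max_steps=200, seed=0):
    """Simulate a robot that always senses its most probable cell next."""
    rng = np.random.default_rng(seed)
    belief = np.full(shape, 1.0 / (shape[0] * shape[1]))  # uniform prior
    visited = []
    for _ in range(max_steps):
        # Greedy choice: go where the current belief is highest.
        cell = tuple(int(i) for i in np.unravel_index(np.argmax(belief), shape))
        visited.append(cell)
        # Simulated noisy sensor reading at that cell.
        p_hit = 0.8 if cell == target else 0.05
        detected = bool(rng.random() < p_hit)
        belief = update_belief(belief, cell, detected)
    return belief, visited
```

Calling `search(target=(1, 2))` returns the final belief map and the list of cells the simulated robot chose to sense. The point of the sketch is only that each reading reshapes the map, and the map in turn decides where the robot looks next.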

The robot can be programmed to “know” what it is looking for. This could involve incorporating the characteristics of a submerged airplane, ship, or other objects into its search criteria. According to Murphey, it will search a specified area until it is confident that nothing is there and then move on to the next area.

“It has good judgment and accepts the best solution, but it doesn’t accept it too soon,” he said. “Not accepting it too soon is one of the main things we’ve solved that is unique to our work at Northwestern. You don’t want the robot to be over-reactive and think it has found something when it hasn’t. You want there to be some level of certainty prior to taking any significant action.”
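The idea of not accepting a result too soon can be sketched as a confidence threshold on the robot's belief. The following is an illustrative assumption, not the Northwestern method: the function names, the sensor rates, and the 0.95 and 0.05 thresholds are all hypothetical.

```python
def update_presence(p_present, detected, p_detect=0.8, p_false=0.05):
    """Bayes update of the probability that the object is in the area.

    p_detect and p_false are assumed sensor rates for illustration.
    """
    like_present = p_detect if detected else 1.0 - p_detect
    like_absent = p_false if detected else 1.0 - p_false
    numerator = like_present * p_present
    return numerator / (numerator + like_absent * (1.0 - p_present))

def decide(p_present, found_threshold=0.95, clear_threshold=0.05):
    """Act only once the belief is confident in one direction."""
    if p_present >= found_threshold:
        return "report"          # confident the object is here
    if p_present <= clear_threshold:
        return "move_on"         # confident nothing is here
    return "keep_searching"      # not enough certainty yet

# One positive reading is not enough to act on; a second one is.
p = update_presence(0.5, detected=True)
first = decide(p)    # "keep_searching"
p = update_presence(p, detected=True)
second = decide(p)   # "report"
```

With these assumed numbers, a single detection raises the belief to about 0.94, just short of the threshold, so the robot keeps searching rather than reacting to what could be a false alarm.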

While Murphey’s algorithms are currently being tested in a submerged robot, they are not limited to underwater scenarios. He will test his work next in small model quadrotors, helicopters that are lifted and propelled by four fans. The quadrotors will be trained to search and map the vertical area around his laboratory.

“There is nothing specific about the underwater setting,” he said. “But being underwater is hard in different ways that are helpful to research. If we can get our algorithms to work in water, we can certainly expect them to work on land.”